Notes on SfN 2018

The annual meeting of the Society for Neuroscience is the largest neuroscience symposium in the world, connecting all branches of neuroscience research, from the molecular biology of synaptic connections to high-level modeling of brain function to behavioral psychology, under the same roof. The attendance this year was 28,600 people. Multiple sessions run in parallel and are organized into lectures, which take place in the main hall, symposia, minisymposia, nanosymposia, and, of course, endless arrays of poster presentations. I was lucky to present our work on comparing deep convolutional neural networks with human visual cortex at a nanosymposium on the very first day, which allowed me to roam freely ever after and attempt to take pictures and notes. I do not expect that this scribble will be of any particular use, but it might give a general idea of the SfN 2018 event.

Day 1

Music and the Brain conversation with Pat Metheny

We all know the special kind of emotion and connection we develop with musical pieces. The emotion can be unreasonably strong and can also create firm associative memories. That should be interesting from the point of view of neuroscience: why is it so strong, and why is it so closely related to memory?

Session on Vision: Representation of Objects and Scenes

This was the session where my presentation “Activations of deep convolutional neural networks are aligned with gamma band activity of human visual cortex” was assigned.

A general observation I made during this session was that, out of 7 talks, 6 relied on machine learning either to determine whether the experimental conditions can be differentiated by looking at the neural activity, or to quantify the amount of information carried by that activity. Only one of the presenters mentioned that they had attempted to analyze and interpret the coefficients of their logistic regression model. The other works relied on machine learning purely as a tool, and that, in my mind, poses dangers, as machine learning models sometimes appear to work due to trivial defects in the data or the training process. Another reason to pay close attention to the resulting models, rather than treat them as black boxes, is the insight such analysis can provide into how a machine learning algorithm was able to decode the neural activity and which features of that activity it relied on.
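To make the point about looking inside the decoder concrete, here is a minimal sketch in plain Python. It is not taken from any of the talks: the data are synthetic, and the function names (`make_trials`, `fit_logistic`, `decode`), the number of features, and the effect size are all assumptions chosen for illustration. It trains a logistic regression to decode a two-condition label, checks which feature carries the largest weight, and repeats the analysis with shuffled labels as a sanity check that the decoder is not picking up on an artifact.

```python
import math
import random


def make_trials(n_trials, n_features, informative, rng):
    """Synthetic 'neural' data: only one feature differs between two conditions."""
    X, y = [], []
    for _ in range(n_trials):
        label = rng.randint(0, 1)
        row = [rng.gauss(0.0, 1.0) for _ in range(n_features)]
        row[informative] += 2.0 * label  # shift the informative feature for condition 1
        X.append(row)
        y.append(label)
    return X, y


def sigmoid(z):
    # Clamp the input to avoid overflow in math.exp for extreme values.
    z = max(-30.0, min(30.0, z))
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.5, epochs=300):
    """Plain batch gradient descent on the logistic loss; returns (weights, bias)."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for row, label in zip(X, y):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, row)) + b) - label
            for j in range(d):
                grad_w[j] += err * row[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def accuracy(w, b, X, y):
    """Fraction of trials whose predicted condition matches the label."""
    hits = 0
    for row, label in zip(X, y):
        pred = 1 if sum(wi * xi for wi, xi in zip(w, row)) + b > 0 else 0
        hits += pred == label
    return hits / len(y)


def decode(seed=0, shuffle_labels=False):
    """Train on 400 trials, test on 200 held-out trials."""
    rng = random.Random(seed)
    X, y = make_trials(600, 5, informative=0, rng=rng)
    if shuffle_labels:
        rng.shuffle(y)  # control: break the link between activity and condition
    w, b = fit_logistic(X[:400], y[:400])
    return w, accuracy(w, b, X[400:], y[400:])


weights, real_acc = decode()
_, shuffled_acc = decode(shuffle_labels=True)
top_feature = max(range(len(weights)), key=lambda j: abs(weights[j]))
print("held-out accuracy:", real_acc)
print("accuracy with shuffled labels:", shuffled_acc)
print("feature with the largest |weight|:", top_feature)
```

In this toy setting the largest weight lands on the feature that was made informative, and the shuffled-label accuracy stays near chance. On real recordings, a shuffled-label accuracy well above chance, or a large weight on a feature that should be irrelevant, would be a hint that the decoder exploits a defect in the data or the pipeline.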

g.tec Brain Computer Interface Workshop by Christoph Guger

g.tec continues to work on their EEG products like MindBEAGLE, but has also started looking into ECoG as a modality that provides a more reliable signal. Applications are, of course, limited due to the invasiveness of the approach, but are justified in some cases.

Day 2

Bidirectional Interactions Between the Brain and Implantable Computers by Eb Fetz

Electrophysiological neuroimaging allows us to read out from the brain, and electrical stimulation allows us to affect its operation. The Neurochip technology developed by the Fetz Lab combines the two. It is an implantable and programmable device that reads out brain activity, performs on-chip computations on that input, and sends the results of the computations back to the cortex (or spine, or muscles). An exciting way to see it is as an artificial piece of brain that the brain can learn to incorporate into its normal activity. Could it be possible to extend the mental capacities of primate and human brains?
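The read, compute, stimulate cycle can be sketched in a few lines of Python. This is a toy illustration of activity-dependent stimulation in general, not the Neurochip's actual firmware: the function name `closed_loop`, the threshold-crossing detector, the fixed delay, and the refractory period are all assumptions made for the example.

```python
def closed_loop(signal, threshold, delay, refractory):
    """Return sample indices at which a stimulation pulse would be scheduled.

    A 'spike' is an upward crossing of `threshold`. Each accepted spike
    schedules one pulse `delay` samples later. Spikes arriving fewer than
    `refractory` samples after the last accepted spike are ignored.
    All parameters are hypothetical and in units of samples.
    """
    stim_times = []
    last_spike = None
    for t in range(1, len(signal)):
        crossed = signal[t - 1] < threshold <= signal[t]       # read out
        ready = last_spike is None or t - last_spike >= refractory
        if crossed and ready:                                  # compute
            last_spike = t
            stim_times.append(t + delay)                       # stimulate
    return stim_times


recording = [0.0, 0.1, 1.2, 0.3, 0.0, 1.5, 1.6, 0.2, 0.0, 1.1]
print(closed_loop(recording, threshold=1.0, delay=2, refractory=4))  # prints [4, 11]
```

The crossing at sample 5 is skipped because it falls inside the refractory window after the spike at sample 2. The "compute" step here is a single comparison; the appeal of a programmable device is that this step can be replaced by any rule that fits on the chip.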

Relevant papers from their lab:

* Long-term motor cortex plasticity induced by an electronic neural implant, Jackson, Mavoori, Fetz, Nature 2006

* Direct control of paralysed muscles by cortical neurons, Moritz, Perlmutter, Fetz, Nature 2008

* Myo-cortical crossed feedback reorganizes primate motor cortex output, Lucas, Fetz, Journal of Neuroscience 2013

* Spike-timing dependent plasticity in primate corticospinal connections induced during free behavior, Nishimura et al., Neuron 2013

Other papers on the topic of bidirectional BCIs and closed-loop activity-dependent stimulation:

* Restoring cortical control of functional movement in a human with quadriplegia, Bouton et al., Nature 2016

* Restoration of reaching and grasping movements through brain-controlled muscle stimulation in a person with tetraplegia: a proof-of-concept demonstration, Ajiboye et al., Lancet 2017

* Developing a hippocampal neural prosthetic to facilitate human memory encoding and recall, Hampson et al., 2018

* Restoration of function after brain damage using a neural prosthesis, Guggenmos et al., PNAS 2013

* Rewiring Neural Interactions by Micro-Stimulation, Rebesco et al., Frontiers in Systems Neuroscience 2010

Neural Data Science: Accelerating the Experiment-Analysis-Theory Cycle in Neuroscience by Liam Paninski

The field of neuroscience is in need of people who speak both neuroscience and statistics / machine learning.

Techniques such as calcium imaging produce huge amounts of very rich data on neuronal activity.

Now is the time to put advanced computational and statistical techniques to use to make the most of that data.

Day 3

Session: Brain-Machine Interface

It looks like many people file “muscle-machine” interfaces under “brain-machine” interfaces. Every second talk mentions deep learning as a better alternative to other decoding algorithms.

New Computational Perspectives on Serotonin Function by Zachary F. Mainen

Serotonin is associated with happiness and is the target of many antidepressants.

(a) Reinforcement learning: how to choose actions that maximize reward. Dopamine acts as a broadcaster of reward prediction error. Does serotonin act as a penalty signal? Experiments showed that if mice are given dopamine for being in a certain corner of the box, they perceive it as a reward and stay in that corner. The same experiments with serotonin showed no effect, neither rewarding nor penalizing. In summary, serotonin does not seem to have a reinforcement role. In other experiments serotonin caused mice to walk less and rest more often, and phasic activation of serotonin decreased the speed of movement. Unlearning of behaviors seems to be catalyzed by serotonin: without it, it takes twice as long to unlearn action responses to specific stimuli. Is dopamine the “pencil” and serotonin the “eraser”? Does serotonin increase the speed of change? It seems that serotonin plays the role of an adaptive learning rate, regulating the speed at which behavior patterns change.
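The learning-rate reading can be illustrated with the standard delta rule, where a value estimate is nudged by the reward prediction error scaled by a learning rate. The sketch below is my own toy example, not a model from the talk; the function name `trials_to_unlearn`, the criterion, and the two learning rates are assumptions picked for illustration.

```python
def trials_to_unlearn(learning_rate, criterion=0.1, max_trials=1000):
    """Count extinction trials until a learned value decays below `criterion`.

    The value starts at 1.0 (fully learned) and the reward is then withheld,
    so every trial produces a negative reward prediction error.
    """
    value, reward = 1.0, 0.0
    for trial in range(1, max_trials + 1):
        prediction_error = reward - value          # the "dopamine-like" error signal
        value += learning_rate * prediction_error  # the learning rate scales the update
        if value < criterion:
            return trial
    return max_trials


slow = trials_to_unlearn(learning_rate=0.1)  # hypothetical low learning rate
fast = trials_to_unlearn(learning_rate=0.2)  # hypothetical high learning rate
print(slow, fast)  # prints 22 11
```

With these made-up numbers, halving the learning rate doubles the number of trials needed to unlearn the response. The prediction error decides the direction of the update, while the learning rate only decides how quickly the old association is erased, which is one way to read the "pencil" and "eraser" picture.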

(b) Bayesian learning. If serotonin sets the learning rate, how does it connect to uncertainty? In experiments, serotonin signals resembled uncertainty signals: they were high when task and response were reversed to confuse the animal.
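One textbook way to tie a learning rate to uncertainty is the Kalman filter for a slowly drifting reward mean. This is offered as an assumption for illustration and is not necessarily the model used in the talk. Here $V_t$ is the value estimate, $r_t$ the observed reward, $\sigma_t^2$ the current uncertainty about the value, $\sigma_r^2$ the observation noise, and $q$ the variance of the drift:

$$
V_{t+1} = V_t + \alpha_t \,(r_t - V_t), \qquad
\alpha_t = \frac{\sigma_t^2}{\sigma_t^2 + \sigma_r^2}, \qquad
\sigma_{t+1}^2 = (1 - \alpha_t)\,\sigma_t^2 + q .
$$

The learning rate $\alpha_t$ grows with uncertainty: when $\sigma_t^2$ is large relative to $\sigma_r^2$ (for example right after a reversal), $\alpha_t$ approaches 1 and new evidence quickly overwrites the old estimate; when the estimate is reliable, $\alpha_t$ shrinks and behavior changes slowly.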

(c) Control theory. The difference between expectation and evidence can be seen as the uncontrollability of the control system. The role of serotonin in control feedback is backed by experimental work: uncontrollable stress leads to much higher serotonin levels than controllable stress. If serotonin slows us down, does it mean that in a stressful situation it pushes us to wait rather than press on? Adding serotonin makes mice more patient in waiting experiments, and also more persistent in attempting again and again. Further experiments showed that expression of 5HT2c leads to persistence (animals try harder), while expression of 5HT2a leads to exploration and to being more flexible and patient. Serotonin signals the failure to achieve a goal, and the 5HT2c/a expression determines the response: try harder, or wait and ignore.

Human Cognition and Behavior: Human Long-Term Memory: Encoding and Retrieval

From Nanoscale Dynamic Organization to Plasticity of Excitatory Synapses and Learning by David W. Tank

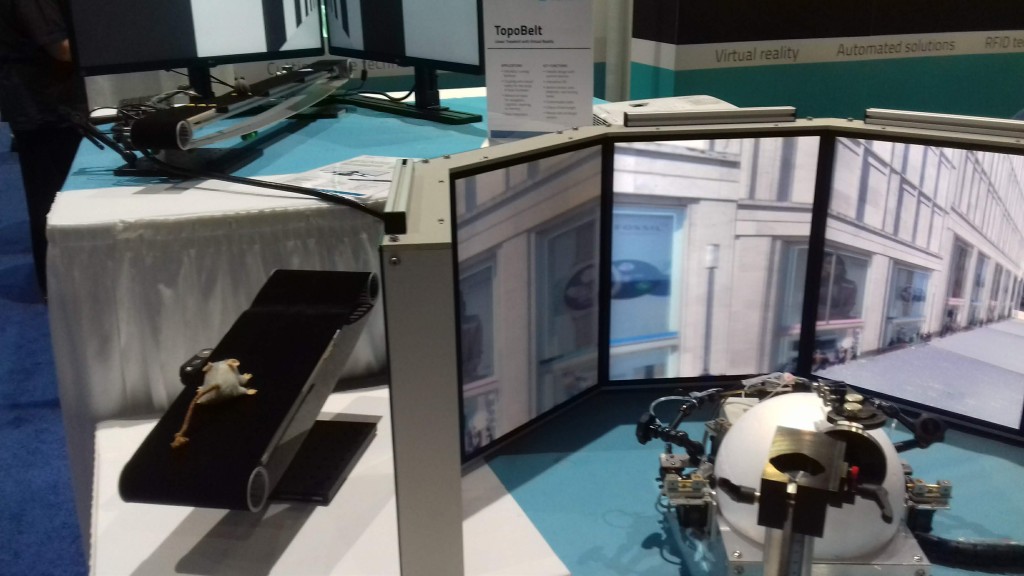

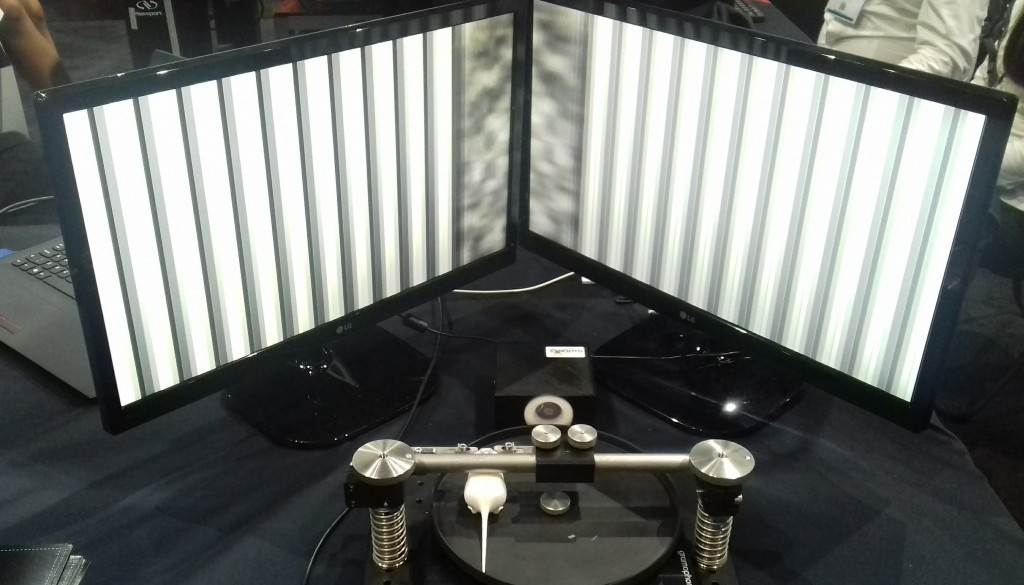

Experiments with rats on a spherical treadmill in a VR environment. Trained a rat, as an RL agent, to run towards rewards. Identified a population of “reward cells” that became active as the rat approached the location where the reward was expected to be.

Nice way to visualize sequences via “neural trajectories”: traces of activity in the space where each dimension is one neuron. These can be reduced to 2D or 3D for visualization purposes. Since trajectories represent different behaviors, one could visually distinguish between the behaviors based on trajectory visualizations.
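As a rough sketch of how such a trajectory can be computed, the snippet below projects simulated population activity onto its first two principal components. PCA is only one possible choice of dimensionality reduction, and I do not know which method was used in the talk; the data are synthetic and all names (`center`, `covariance`, `top_eigenvector`, `pca_2d`) are made up for this example. It uses power iteration so that it runs with the standard library alone.

```python
import math
import random


def center(data):
    """Subtract each neuron's mean activity (column mean) from its trace."""
    n_neurons = len(data[0])
    means = [sum(row[j] for row in data) / len(data) for j in range(n_neurons)]
    return [[row[j] - means[j] for j in range(n_neurons)] for row in data]


def covariance(data):
    """Neuron-by-neuron covariance of already centered data."""
    n, d = len(data), len(data[0])
    return [[sum(row[i] * row[j] for row in data) / (n - 1) for j in range(d)]
            for i in range(d)]


def top_eigenvector(matrix, rng, iterations=500):
    """Power iteration: repeatedly apply the matrix and renormalize."""
    d = len(matrix)
    v = [rng.gauss(0.0, 1.0) for _ in range(d)]
    for _ in range(iterations):
        v = [sum(matrix[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
    eigenvalue = sum(v[i] * sum(matrix[i][j] * v[j] for j in range(d))
                     for i in range(d))
    return eigenvalue, v


def pca_2d(data, seed=0):
    """Project time-by-neuron activity onto its first two principal components."""
    rng = random.Random(seed)
    centered = center(data)
    cov = covariance(centered)
    components = []
    for _ in range(2):
        eigenvalue, v = top_eigenvector(cov, rng)
        components.append(v)
        # Deflation: remove the found component so the next one can surface.
        cov = [[cov[i][j] - eigenvalue * v[i] * v[j] for j in range(len(v))]
               for i in range(len(v))]
    return [[sum(x * c for x, c in zip(row, comp)) for comp in components]
            for row in centered]


# Synthetic population: 20 neurons mixing two latent signals plus noise.
rng = random.Random(1)
n_neurons, n_steps = 20, 100
mix = [[rng.gauss(0.0, 1.0) for _ in range(2)] for _ in range(n_neurons)]
activity = []
for t in range(n_steps):
    phase = 2 * math.pi * t / n_steps
    latent = (math.cos(phase), math.sin(phase))
    activity.append([m[0] * latent[0] + m[1] * latent[1] + rng.gauss(0.0, 0.1)
                     for m in mix])

trajectory = pca_2d(activity)
print(len(trajectory), len(trajectory[0]))  # prints 100 2
```

Each row of `trajectory` is one time point in the reduced 2D space, so plotting the rows in order as a connected line gives the trajectory. Recordings from different behaviors would be projected onto the same two components to compare their paths.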

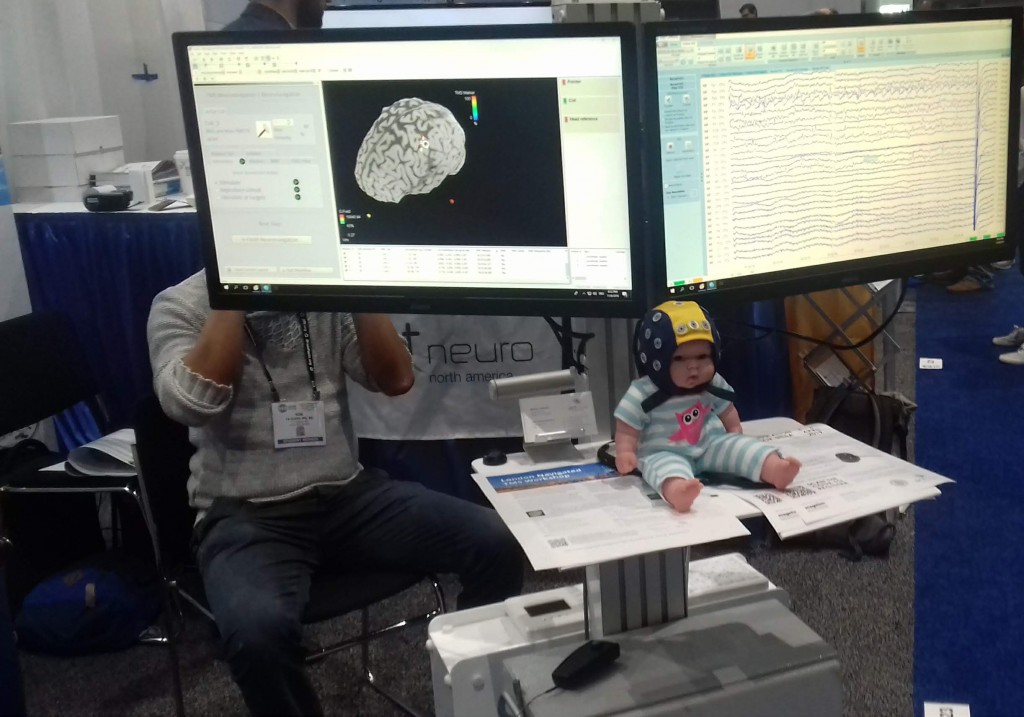

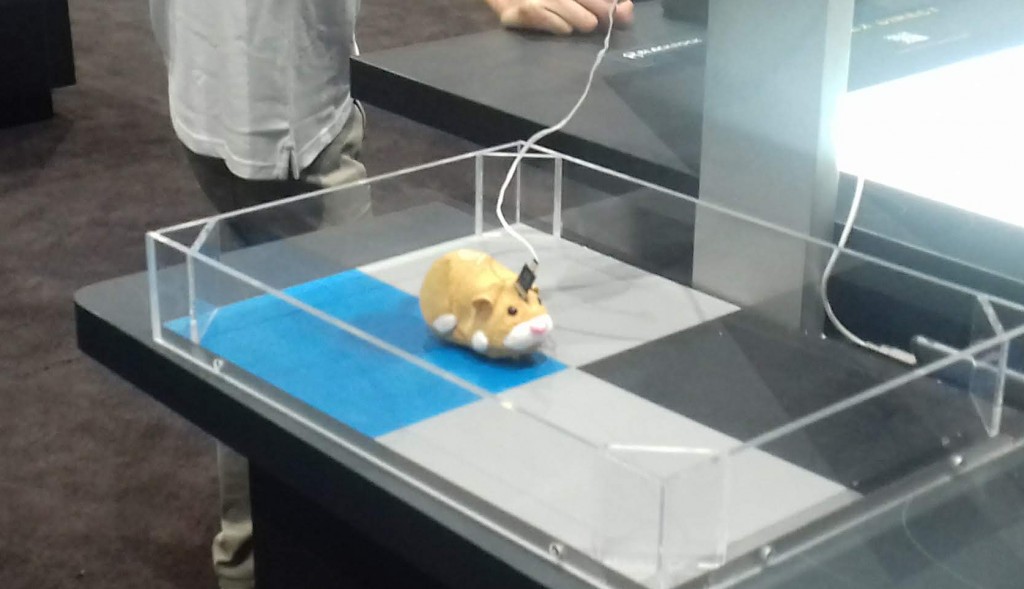

EXPO

Microscopes, electrophysiological equipment, surgical tools, tools I do not know the purpose of, weird contraptions for animal experiments, I will never look at plush animals the same way again, VR for rats, brain tissue on demand…

Day 4

The Genetics, Neurobiology, and Evolution of Natural Behavior by Hopi E. Hoekstra

Observing the behavior of mice led to the realization that two closely related species behave differently. Comparing their genomes made it possible to pinpoint that the difference in parental behaviors correlates with the expression of specific genes. This speaks to the question of genotype vs. phenotype in behavior.

Human Cognition and Behavior: Human Long-Term Memory Representations: Network and Circuit Mechanisms

Deciphering Neural Circuits: From the Neuron Doctrine to the Connectome by Marina Bentivoglio

Very nice talk about the history of neuroscience, including the story of the rivalry between Santiago Ramón y Cajal and Camillo Golgi.

From Salvia Divinorum to LSD: Toward a Molecular Understanding of Psychoactive Drug Actions by Bryan L. Roth

A fascinating story of the research on Salvia Divinorum. Basically, Daniel Siebert was crazy enough to test neural blockers to figure out which chemical is the core active component of Salvia Divinorum. Another important message from the talk is that, after a period of controversy, research on psychedelic substances is recovering its place, which is good news because this is the only way we can empirically explore altered states of consciousness and mind.

Day 5

Animal Cognition and Behavior: Learning and Memory: Cortical-Hippocampal Interactions II

The Basal Ganglia: Beyond Action Selection

Conclusions

SfN is overwhelming, but worth attending on multiple levels! Machine learning is being used by neuroscientists a lot, but mostly as a tool. The area of brain-computer interfaces is alive and might bloom from its connection to AI and reinforcement learning.
