Comparing the human visual cortex with artificial systems of vision in Nature’s Communications Biology!

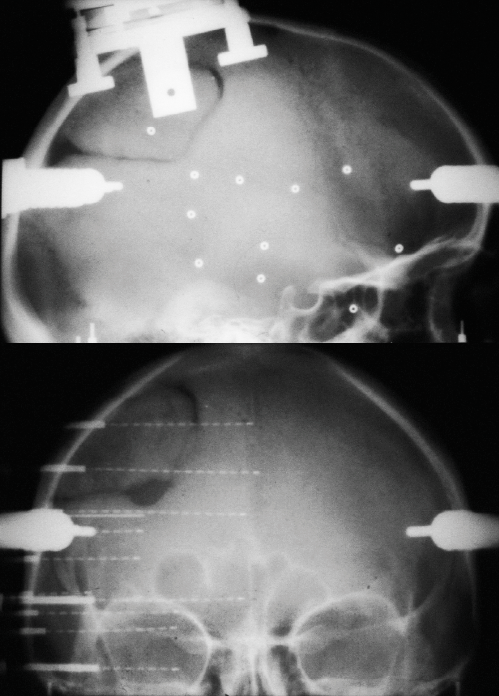

We had a cool dataset from 100 patients who looked at pictures while they had intracranial electrodes implanted in their brains.

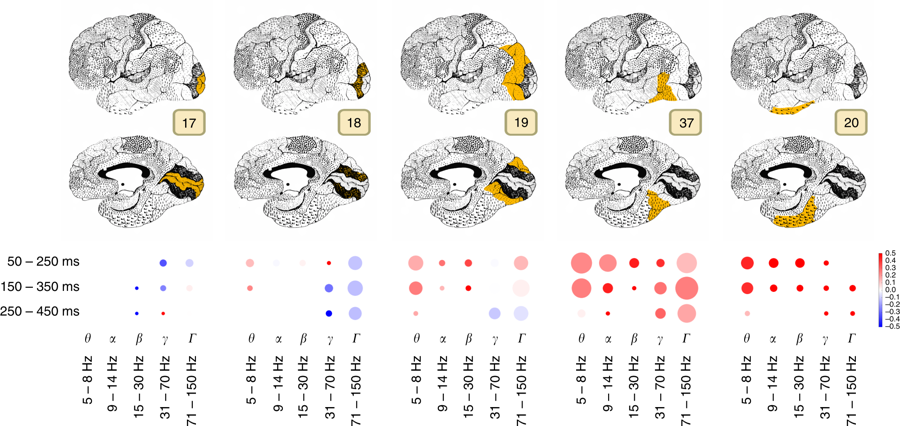

With these electrodes we recorded how the human brain reacts to natural images from eight categories: animals, houses, etc.

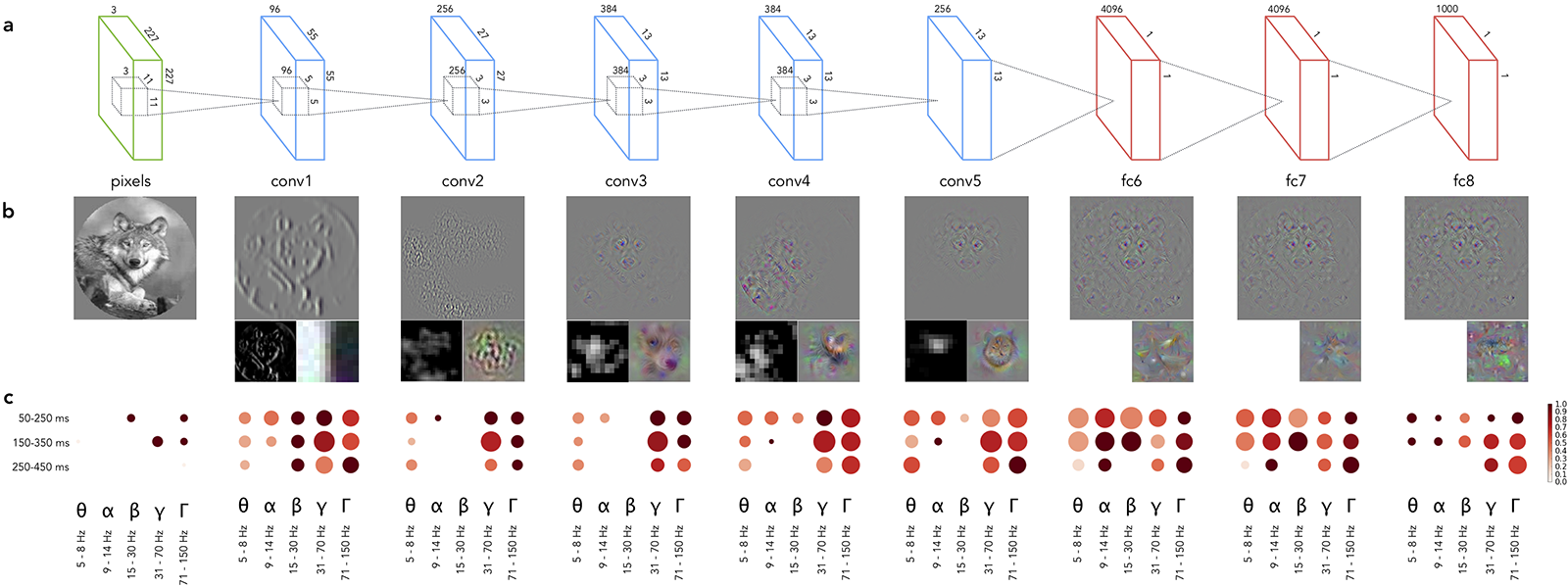

Then we showed the same images to a Deep Convolutional Neural Network that was trained to recognize objects in images and, just as with the humans, recorded how the artificial brain reacted to those images.

And, sure enough, we went and compared those activations. We confirmed the similarities between the hierarchies of the biological and artificial systems of vision, identified which kind of brain activity matches this hierarchy most closely, and made a couple of other interesting observations along the way.
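To give a feel for what "comparing activations" can look like, here is a minimal sketch of representational similarity analysis (RSA), one common way to compare two systems that respond to the same images. It runs on synthetic data only. The function names (`rdm`, `rsa_score`), the array shapes, and the random "brain" and "layer" responses are illustrative assumptions, not the code or data from the paper; the actual analysis is in the paper and the repository linked below.

```python
import numpy as np


def rdm(responses):
    """Representational dissimilarity matrix.

    responses: array of shape (n_images, n_features), one row per image.
    Returns an (n_images, n_images) matrix of 1 - Pearson correlation
    between the response patterns of every pair of images.
    """
    return 1.0 - np.corrcoef(responses)


def upper_triangle(matrix):
    """Flatten the entries above the diagonal (each image pair once)."""
    rows, cols = np.triu_indices(matrix.shape[0], k=1)
    return matrix[rows, cols]


def spearman(a, b):
    """Spearman rank correlation; assumes no ties (true for continuous data)."""
    rank_a = np.argsort(np.argsort(a)).astype(float)
    rank_b = np.argsort(np.argsort(b)).astype(float)
    return np.corrcoef(rank_a, rank_b)[0, 1]


def rsa_score(responses_a, responses_b):
    """How similarly two systems arrange the same images relative to each other."""
    return spearman(upper_triangle(rdm(responses_a)),
                    upper_triangle(rdm(responses_b)))


# Synthetic stand-ins (assumptions): 40 images, 30 "electrode" features,
# 64 "network unit" features. The brain and the matched layer share a
# hidden 5-dimensional structure; the unrelated layer is pure noise.
rng = np.random.default_rng(0)
n_images = 40
latent = rng.normal(size=(n_images, 5))
brain = latent @ rng.normal(size=(5, 30)) + 0.1 * rng.normal(size=(n_images, 30))
matched_layer = latent @ rng.normal(size=(5, 64)) + 0.1 * rng.normal(size=(n_images, 64))
unrelated_layer = rng.normal(size=(n_images, 64))

score_matched = rsa_score(brain, matched_layer)
score_unrelated = rsa_score(brain, unrelated_layer)
print("matched layer:  ", round(score_matched, 3))
print("unrelated layer:", round(score_unrelated, 3))
```

The two systems never need the same number of features, because the comparison works on image-by-image dissimilarities rather than on the raw activations. Repeating such a score for every network layer and every brain region is what lets one ask whether the two hierarchies line up.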

See our paper in Communications Biology for the details: https://www.nature.com/articles/s42003-018-0110-y

Code and (part of) data are public: https://github.com/kuz/Human-Intracranial-Recordings-and-DCNN-to-Compare-Biological-and-Artificial-Mechanisms-of-Vision
